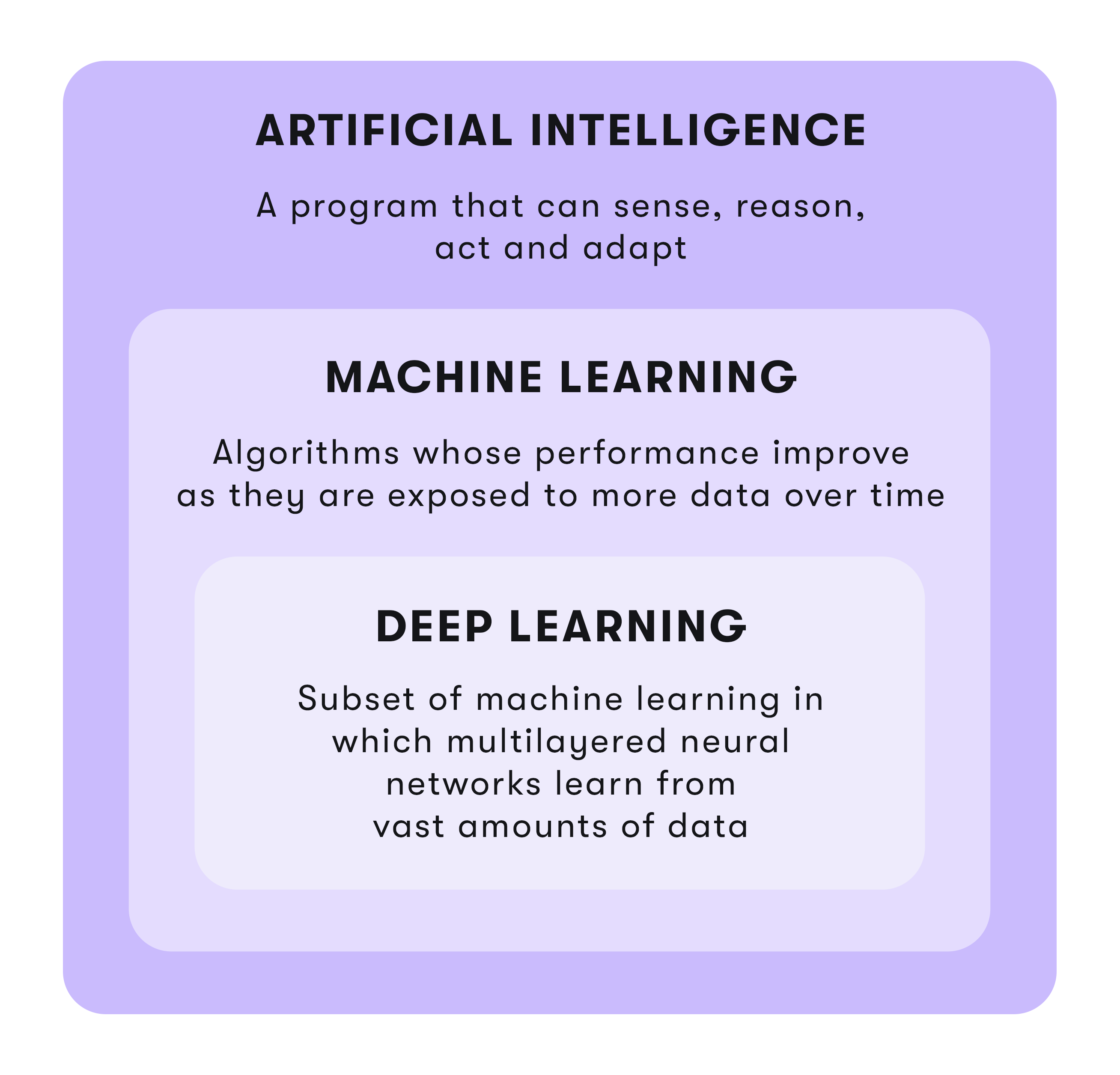

Artificial intelligence (AI) is a science that aims to make computers think and act like humans. But it's not as simple as it sounds. No computer can yet match the complexity of human intelligence. The key to this goal lies in machine learning (ML) and deep learning (DL). That's because these two technologies are able to analyze large amounts of data and make decisions and predictions based on it with as little human intervention as possible.

However, this shared foundation often leads to the terms Deep Learning and Machine Learning being used as interchangeable buzzwords in the AI world. They are not the same thing, so it is worth taking a closer look at both terms.

What is Machine Learning?

Machine Learning - a term coined by Arthur Samuel in 1959 - is a subset of Artificial Intelligence. It is designed to enable computers to perform tasks without being explicitly programmed for them. To do this, ML uses algorithms to recognize and interpret patterns in data, learn from them, and make the best possible decisions or predictions based on these insights. In general, the learning process of these algorithms can be either supervised or unsupervised, depending on the data the algorithms are fed.

How does Machine Learning work?

Typically, computers are fed structured data, such as examples, experiences, or instructions. They look for patterns in this data and automatically learn to analyze it better over time, so that they can make better decisions and predictions in the future without human intervention or input. ML algorithms use statistics to find patterns in vast amounts of data. In principle, anything that can be stored digitally can be fed as data into an ML algorithm.

Machine learning algorithms are often categorized as supervised or unsupervised.

What is Supervised Learning?

Supervised learning is a subset of machine learning in which humans supply the correct answers in advance - hence the name "supervised." The computer is fed training data in which every input is paired with the desired output (a label), and a model is trained to reproduce that relationship. The supervised learning model therefore has a set of input and output variables, and an algorithm identifies the mapping function between them.

Specifically, in this case, "supervised" means that the algorithm is corrected each time to optimize the result. The algorithm is trained over the data set and modified until it reaches an acceptable level of performance. In this process, the algorithm is refined until it is able to accurately process new data sets that follow the learned patterns. In semi-supervised learning, the computer is fed a minimal amount of labeled data and a large amount of unlabeled data and searches for patterns on its own.
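The correction loop described here can be sketched in a few lines of Python. This is a minimal illustration, not a production method: the function name `fit_line`, the toy data, and the learning rate are assumptions made for this example, and the model is a simple straight line fitted by gradient descent on the mean squared error.

```python
def fit_line(xs, ys, lr=0.05, epochs=2000):
    """Learn w and b so that w * x + b approximates the labeled outputs."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # The error is measured against the known correct outputs (the labels).
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        grad_w = 2 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2 / n * sum(errors)
        # Correct the parameters a little in the direction that reduces the error.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Hypothetical labeled training data, generated from y = 2x + 1.
train_x = [0.0, 1.0, 2.0, 3.0, 4.0]
train_y = [1.0, 3.0, 5.0, 7.0, 9.0]

w, b = fit_line(train_x, train_y)
prediction = w * 10.0 + b  # apply the learned mapping to an unseen input
```

After training, `w` and `b` end up close to 2 and 1, so the model can process an input it has never seen (here, 10) by following the learned pattern.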

What is Unsupervised Learning?

Unsupervised learning goes one step further. Here, unlabeled data is used: unlike supervised learning, only input variables are provided, and no correct answers are given. The algorithm must work out for itself what the data represents. The goal is to explore the data and find hidden patterns and intrinsic structures in it. The computer is given the freedom to find patterns and associations at will, often leading to results that may not have been visible to a human data analyst.

The most common method of unsupervised learning is clustering, which is used for exploratory data analysis to find hidden patterns or groupings in data. Applications for cluster analysis include gene sequence analysis, market research, and object recognition.
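As an illustration of clustering, the following sketch implements a bare-bones version of k-means, one common clustering algorithm (the text above does not name a specific one). The function name `kmeans`, the sample points, and the choice to start from the first `k` points instead of random ones are assumptions made to keep the example short and reproducible.

```python
def kmeans(points, k, iterations=10):
    """Group 2-D points into k clusters; no labels are provided."""
    # Simplification: start from the first k points instead of random picks.
    centroids = [points[i] for i in range(k)]
    assignments = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        for i, (px, py) in enumerate(points):
            distances = [(px - cx) ** 2 + (py - cy) ** 2 for cx, cy in centroids]
            assignments[i] = distances.index(min(distances))
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, a in zip(points, assignments) if a == c]
            if members:
                centroids[c] = (
                    sum(p[0] for p in members) / len(members),
                    sum(p[1] for p in members) / len(members),
                )
    return centroids, assignments


# Hypothetical unlabeled data with two visibly separate groups.
points = [(1.0, 1.0), (8.0, 8.0), (1.5, 2.0), (9.0, 8.5), (1.0, 1.5), (8.5, 9.0)]
centroids, assignments = kmeans(points, k=2)
```

The algorithm is never told which group a point belongs to; the grouping in `assignments` emerges purely from the distances between the points.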

What is Reinforcement Learning?

Reinforcement Learning is the training of ML models to make a sequence of decisions. In reinforcement learning, the computer tries to find a solution to the problem via trial and error. In the process, the AI receives either rewards or penalties for the actions it performs. It is up to the model to figure out how to perform the task in order to maximize the reward, ranging from completely random trials to sophisticated tactics.

By leveraging the power of search and many trials, reinforcement learning is currently one of the most effective ways to elicit creative, unexpected solutions from machines. Unlike other machine learning techniques, reinforcement learning is not constrained by human-labeled data. This makes reinforcement learning suitable for domains where training data is unavailable, scarce, or constrained by legal restrictions.
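The trial-and-error idea can be sketched with tabular Q-learning, one widely used reinforcement learning algorithm (chosen here for illustration; the text does not prescribe it). The environment is a made-up corridor of five positions in which only reaching the rightmost position is rewarded. The names `train`, `N_STATES`, and `ACTIONS`, as well as all parameter values, are assumptions for this example, and for brevity it uses only rewards, no penalties.

```python
import random

N_STATES = 5          # positions 0..4 in a corridor; position 4 is the goal
ACTIONS = (-1, +1)    # index 0 moves left, index 1 moves right


def train(episodes=200, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # estimated value of each action
    for _ in range(episodes):
        state = 0
        while state != N_STATES - 1:
            # Explore at random sometimes (and on ties); otherwise exploit.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            next_state = min(max(state + ACTIONS[action], 0), N_STATES - 1)
            reward = 1.0 if next_state == N_STATES - 1 else 0.0
            # Q-learning update: move the estimate toward the reward
            # plus the discounted value of the best next action.
            target = reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q


q_table = train()
# Read off the learned behavior for every non-goal position.
policy = ["left" if left > right else "right" for left, right in q_table[:-1]]
```

The agent starts with completely random moves and is never shown a correct path; the preference for moving right in every position emerges from the rewards alone.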

What is Machine Learning used for?

ML is something we encounter every day. Google, for example, uses ML to filter out spam, malware, and phishing emails from inboxes. Banks and credit card institutions use ML to generate alerts about suspicious transactions on customer accounts. For Siri and Alexa, machine learning drives speech and voice recognition platforms. And medicine is already using ML to scan X-rays and blood test results for abnormalities such as cancer.

ML can automate a variety of routine tasks and helps create accurate and scalable models for data analysis. It is now used in virtually every industry - from malware detection to weather forecasting to stock brokers searching for optimal trades.

From speech recognition to sentiment analysis

Another common application is facial recognition, whether for unlocking a smartphone or for security purposes such as identifying criminals or searching for missing persons. Automatic speech recognition is another playground for ML: it is used, for example, to convert speech into digital text, to authenticate users by voice, and to execute tasks based on spoken input.

Combined with Natural Language Processing (NLP), Machine Learning enables computers to interpret and process spoken and written language, allowing seamless interaction between humans and technology. Complex workflows with chatbots or virtual assistants (VAs) benefit especially from this technological marriage: they can not only execute tasks but also communicate about them appropriately, while learning from data sets to make even better decisions and provide appropriate services. Computer vision tools such as Optical Character Recognition (OCR) are the little brother here, converting scanned documents or photos into text.

In financial services, ML is suitable for fraud detection: it monitors each user's activity and assesses whether an attempted activity is typical for that user or not. It can also help detect money laundering. ML improves lead scoring algorithms by incorporating various parameters such as website visits, emails opened, downloads, and clicks to score each lead. ML enables sentiment analysis to measure consumer response to a particular product or marketing initiative. More recently, scientists have also begun using ML to predict epidemic trajectories and outbreaks.
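To make the fraud-detection idea concrete, here is a deliberately simple statistical baseline: it flags a transaction whose amount lies far from what is typical for that user. Real systems use far richer features and learned models; the function name `is_unusual`, the threshold of three standard deviations, and the sample amounts are all assumptions for this sketch.

```python
from statistics import mean, stdev


def is_unusual(history, amount, threshold=3.0):
    """Flag an amount that deviates strongly from the user's past amounts."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # All past amounts are identical, so any different amount stands out.
        return amount != mu
    # Distance from the user's mean, measured in standard deviations.
    return abs(amount - mu) / sigma > threshold


# Hypothetical past transaction amounts for one user.
past_amounts = [42.0, 38.5, 51.0, 45.2, 40.3, 47.8]

typical = is_unusual(past_amounts, 46.0)      # close to the usual range
suspicious = is_unusual(past_amounts, 950.0)  # far outside the usual range
```

Because the profile is computed per user, the same amount can be normal for one customer and suspicious for another.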

And what is Deep Learning?

Deep Learning is a subset of Machine Learning based on artificial neural networks. While in ML the abilities of computers to perform complex tasks still fall far short of what humans are capable of, Deep Learning relies on algorithms that analyze data with a logical structure, similar to how a human would draw conclusions. To do this, DL structures the algorithms in multiple layers to create an artificial neural network (ANN) that can learn from massive amounts of data and make intelligent decisions on its own. Just as humans use the brain to recognize patterns and classify different types of information, DL can be taught to perform the same tasks with data.

How does Deep Learning work?

Deep Learning models involve the construction of complex, multi-layered neural networks. These ANNs are inspired by the biological neural network of the human brain. Thanks to this layered structure, DL can learn far more complex relationships than standard machine learning models. An ANN consists of several layers: an input layer, an output layer, and the layers in between, known as hidden layers.

Each layer contains adjustable parameters (weights) that adapt to the characteristics of the data the network is trained with, enabling it to perform tasks such as classifying images and converting speech to text. The more hidden layers a network has between the input and output layers, the deeper it is. In general, any ANN with two or more hidden layers is called a deep neural network.
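The layered structure can be sketched as a forward pass through a tiny network with two hidden layers. The weights below are hand-picked, hypothetical values chosen only for illustration; in a real network, training would set them. The helper names `dense`, `relu`, `sigmoid`, and `forward` are likewise assumptions for this example.

```python
import math


def dense(inputs, weights, biases, activation):
    """One fully connected layer: a weighted sum per neuron, then an activation."""
    return [
        activation(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


def relu(z):
    return max(0.0, z)


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical weights; each inner list holds the weights of one neuron.
hidden1_w = [[0.5, -0.2], [0.3, 0.8], [-0.6, 0.1]]  # 2 inputs -> 3 neurons
hidden1_b = [0.0, 0.1, 0.2]
hidden2_w = [[0.4, -0.5, 0.2], [0.7, 0.1, -0.3]]    # 3 neurons -> 2 neurons
hidden2_b = [0.05, -0.05]
output_w = [[0.9, -0.4]]                            # 2 neurons -> 1 output
output_b = [0.1]


def forward(x):
    h1 = dense(x, hidden1_w, hidden1_b, relu)       # first hidden layer
    h2 = dense(h1, hidden2_w, hidden2_b, relu)      # second hidden layer
    return dense(h2, output_w, output_b, sigmoid)[0]  # output layer


score = forward([1.0, 2.0])  # a value between 0 and 1
```

With two hidden layers between input and output, this toy network already meets the definition of a deep neural network given above.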

Neural networks learn by themselves

In a neural network, only the starting point and the desired result are entered. The network then learns on its own. By allowing the network to learn on its own, there is no need to input all the rules. Only the architecture of the neural network is created. Once the system is trained, it can be shown a new image, for example, and it will be able to distinguish and classify the image.

Convolutional Neural Networks

Convolutional Neural Networks (CNNs) are specially designed algorithms that take an input image, assign meaning to different aspects and objects in the image, and can also distinguish between them. The architecture of a Convolutional Neural Network is loosely modeled on the connectivity patterns of neurons in the human brain. Here, small filters are slid across the image region by region, helping the computer to understand and respond to elements within the image itself. This technique is useful when scanning a large number of images for a specific element or feature, such as finding an object on the ocean floor or identifying the face of a single person in an image of a crowd.
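The filter operation at the heart of a CNN can be shown on a tiny example. The sketch below slides a 3x3 filter over a small grayscale image without padding. The function name `convolve2d`, the image, and the hand-written edge filter are assumptions for illustration; a real CNN learns its filter values from data and stacks many such filters.

```python
def convolve2d(image, kernel):
    """Slide `kernel` over `image` (no padding) and sum the element-wise products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(
                image[r + i][c + j] * kernel[i][j]
                for i in range(kh)
                for j in range(kw)
            )
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Tiny grayscale image: dark left half (0), bright right half (1).
image = [
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
]

# Hand-written filter that responds to dark-to-bright vertical edges.
vertical_edge = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

feature_map = convolve2d(image, vertical_edge)
# feature_map == [[0, 3, 3, 0], [0, 3, 3, 0]]
```

The resulting feature map is zero over the uniform regions and non-zero only where the filter window covers the edge, which is how a filter highlights a specific element within an image.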

Recurrent Neural Networks

Recurrent Neural Networks (RNNs) introduce a key element that is missing in simpler algorithms: memory. RNNs work with sequential data or time series data. A Recurrent Neural Network remembers the past, and its decisions are influenced by what it has learned from it: the computer is able to "remember" past data points and decisions and take them into account when reviewing current data. These DL algorithms are often used in ordinal or temporal problems, such as language translation, natural language processing (NLP), or speech recognition, and can be found in popular applications such as Siri or Google Translate.
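The "memory" of an RNN comes from a hidden state that is carried from one step of the sequence to the next. The sketch below uses a single recurrent unit with fixed, hypothetical weights (`w_x`, `w_h`, and `b` are assumptions for this example, as is the function name `rnn_states`); a trained network would learn these values and use many units.

```python
import math


def rnn_states(inputs, w_x=1.0, w_h=0.5, b=0.0):
    """Run one recurrent unit over a sequence and return its state after each step."""
    h = 0.0  # the hidden state starts empty
    states = []
    for x in inputs:
        # The new state depends on the current input AND the previous state.
        h = math.tanh(w_x * x + w_h * h + b)
        states.append(h)
    return states


# Two sequences that differ only in their first element.
with_signal = rnn_states([1.0, 0.0, 0.0])
without_signal = rnn_states([0.0, 0.0, 0.0])
```

Although both sequences end with the same two inputs, the final states differ: the first sequence still carries a fading trace of the early input, whereas the second stays at zero.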

What is Deep Learning used for?

DL has a wide range of applications. In automated driving, it recognizes objects such as STOP signs or pedestrians. Home assistance devices such as Alexa and Siri also rely on Deep Learning algorithms to respond to the user's voice and know their preferences. Without Deep Learning, there would be no chatbots, Google Translate would be a rudimentary translation engine, and the streaming service Netflix would have no idea which movies or TV series to suggest.

In agriculture, DL can be used to optimize yield production by using data from sensors and satellites, taking temperature and humidity into account, to make predictions. In sales and marketing, potential customers most likely to buy the solution can be identified. In brand protection, logo and product counterfeiting can be detected online. DL enables companies to run data-driven predictive advertising, real-time advertising or targeted display advertising. In the gas and oil industry, DL can extract insights from data previously hidden to achieve key goals such as seismic modeling, automated well scheduling, machine failure predictions, and supply chain optimization.

Machine Learning and Deep Learning

The terms Machine Learning and Deep Learning are often used interchangeably. Nevertheless, there are some distinguishing features. In contrast to machine learning, deep learning is still a young subfield of artificial intelligence based on artificial neural networks. Machine Learning is about enabling computers to think and act with less human intervention. Deep Learning is about computers learning to think using structures that mimic the human brain.

In a Deep Learning model, the manual feature extraction step is unnecessary. An image classifier, for example, can learn the distinguishing characteristics of a car by itself and make a correct prediction without a human defining those characteristics first. Classical machine learning, on the other hand, typically relies on humans to select and prepare the features the model works with. Deep Learning is more complex to set up, but requires minimal human intervention afterward. Because ML programs are less complex than Deep Learning algorithms, they can be run on traditional computers.

Deep Learning, on the other hand, requires much more powerful hardware and resources. ML models can be set up and run quickly, but the expressiveness of their results is limited. In contrast, DL systems require more effort to set up, but once trained they deliver results quickly. While ML mostly deals with structured data and uses traditional algorithms such as linear regression, DL works with neural networks and was developed specifically to process large amounts of unstructured data.

Conclusion

Innovative technologies are becoming part of our everyday lives all the time. At the same time, the demands that consumers place on companies' services and applications are growing. To keep up with consumer expectations, companies are increasingly relying on learning algorithms to make things easier. You don't need a crystal ball to see that machine learning and deep learning are currently the most effective AI technologies for numerous applications, and that they will change our lives and those of the next generation in ways that were impossible decades ago.

But that's not all. In the coming years, technology research will develop and refine learning methods and models that can more closely mimic human behavior. AI technologies like these are also an important part of automating processes. They make up the "hyper" in Hyperautomation and the "intelligent" in Intelligent Automation.