In both our private and business lives, almost everything now revolves around automation: email filters, voice assistants, text prediction, search results, and data and text analysis. With the rise of Google as the leading search engine, the increasing digitization of our world, and our growing reliance on digital tools, Natural Language Processing (NLP) has crept into our lives almost unnoticed.

Without NLP, a main branch of Artificial Intelligence (AI), innovative business process automation would not be possible. Natural Language Processing enables companies to understand unstructured data and gain valuable insights to improve their decision-making processes. Companies are using Natural Language Processing as part of the Digital Transformation to automate routine tasks, reduce time and costs, and ultimately become more efficient.

Natural Language Processing (NLP)

Natural Language Processing is a field of Artificial Intelligence in which computers intelligently analyze and understand human speech and infer meanings, as well as the speaker's intentions and feelings, so that repetitive tasks can be performed automatically. Essentially, Natural Language Processing is about understanding, interpreting and mimicking the complexity of our natural, spoken language.

Advantages of NLP

Business data contains a wealth of valuable insights. In most cases, it is unstructured data. Natural Language Processing has the potential to improve data analysis and extract key insights from data faster. NLP solutions are able to speed up business processes by automating the review of contracts. Natural Language Processing increases the efficiency of documentation processes and the accuracy of documentation.

Overall, Natural Language Processing delivers more efficient workflows, reduced costs, higher customer satisfaction and improved analytics. NLP solutions work around the clock and apply the same criteria to all data, which keeps results consistent. NLP-based chatbots can help dramatically reduce the cost of manual and repetitive tasks. Because they understand the intent and context of a user request and can serve multiple customers at once, customer wait times are reduced and service quality increases.

Natural Language Processing: How it works

Natural Language Processing breaks down human speech - based on so-called text or sound data sets - into fragments so that the grammatical structure of sentences and the meaning of words can be analyzed and understood in context. This helps computers read and understand spoken or written text in the same way as humans. Natural Language Processing uses two different algorithm approaches to do this.

The rule-based approach, the earliest approach to NLP algorithm development, makes use of grammatical rules created by linguistics experts. The Machine Learning (ML) approach is based on statistical methods. Machine Learning algorithms learn on their own and perform tasks automatically after being fed with data sets (text data, sound data); no manual rules are required. Machine Learning in general can also be fed with signal data, physical data, biological data, anomaly data and more, whereas Natural Language Processing focuses on text and sound data sets.
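
To make the contrast between the two approaches concrete, here is a minimal Python sketch. It is not taken from any particular NLP product: the labels, the keyword rule and the five training sentences are invented for illustration, and the learned model is a small Naive Bayes classifier chosen only because it is one of the simplest statistical methods.

```python
import math
from collections import Counter, defaultdict

def rule_based_label(text):
    """Rule-based approach: a hand-written rule decides the label."""
    complaint_words = {"broken", "refund", "late"}  # hypothetical expert-written rule
    words = set(text.lower().split())
    return "complaint" if words & complaint_words else "other"

def train_naive_bayes(examples):
    """ML approach: count word frequencies per label from labelled examples."""
    word_counts = defaultdict(Counter)
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.lower().split())
    return word_counts, label_counts

def predict(model, text):
    """Pick the label with the highest log-probability for the text."""
    word_counts, label_counts = model
    vocab = {w for counts in word_counts.values() for w in counts}
    total_docs = sum(label_counts.values())
    scores = {}
    for label in label_counts:
        total_words = sum(word_counts[label].values())
        score = math.log(label_counts[label] / total_docs)
        for w in text.lower().split():
            # Laplace smoothing so unseen words do not zero out the probability
            score += math.log((word_counts[label][w] + 1) / (total_words + len(vocab)))
        scores[label] = score
    return max(scores, key=scores.get)

# Invented toy training data
training_data = [
    ("my order arrived broken", "complaint"),
    ("i want a refund", "complaint"),
    ("the delivery was damaged", "complaint"),
    ("thank you for the quick help", "other"),
    ("great service and friendly staff", "other"),
]
model = train_naive_bayes(training_data)

print(rule_based_label("the parcel was damaged"))  # the rule has no entry for "damaged"
print(predict(model, "the parcel was damaged"))    # the model saw "damaged" in training
```

The rule only knows the words its author wrote down, while the statistical model picks up "damaged" from the examples without anyone writing a rule for it.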

Combining Machine Learning and Deep Learning (DL) models with Artificial Intelligence (AI) enables Natural Language Processing to automatically extract, classify, and label elements from text and sound data sets and assign a statistical probability to each possible meaning of those elements.

NLP systems based on learning techniques such as the Convolutional Neural Network (CNN) and Recurrent Neural Network (RNN) learn as they go and can extract increasingly accurate meanings from vast amounts of raw, unstructured, and unlabeled text and sound data sets. For a holistic analysis of text meaning, it is important to collect large amounts of data upfront and use already known patterns for meaning analysis. In addition to Artificial Intelligence, Machine Learning and Deep Learning, Big Data techniques also play a major role.

Techniques

The most important techniques to process natural language are syntactic analysis and semantic analysis. They are key to understanding the grammatical structure of a text and identifying the relationship between words in the text context.

Syntax analysis

Syntax analysis determines the meaning of words by looking at the grammar behind a sentence. It is the process of structuring the text using grammatical conventions of the language. Algorithms analyze sentences by dividing them into groups of words and phrases and deriving meaning from them.

The syntax techniques used include

- lemmatization, which reduces the various inflected forms of a word to its base form, also known as a "lemma," for easy analysis. It relies on morphological analysis: the algorithm searches detailed dictionaries in order to link the word back to its lemma.

- morphological segmentation, in which words are divided into individual units called morphemes.

- word segmentation, in which a continuous text is divided into different units.

- part-of-speech tagging, which identifies the word type (noun, verb, adjective, etc.) for each word.

- parsing, which involves dividing a sentence into its constituent parts to find out its meaning. By examining the relationships between specific words, algorithms are able to accurately determine their structure.

- sentence breaking, which sets sentence boundaries in a large piece of text.

- stemming, in which the end or the beginning of a word is cut off based on a list of common prefixes and suffixes found in inflected words.
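
A few of the techniques listed above can be sketched in plain Python. This is a deliberately naive illustration, not how production NLP libraries work: the suffix list and the lemma dictionary are tiny invented samples, and the sentence splitter would stumble over abbreviations such as "e.g.".

```python
import re

def split_sentences(text):
    """Sentence breaking: split after '.', '!' or '?' followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]

def tokenize(sentence):
    """Word segmentation: extract lowercase runs of letters, digits and apostrophes."""
    return re.findall(r"[A-Za-z0-9']+", sentence.lower())

SUFFIXES = ("ing", "ed", "es", "s")  # hypothetical, far from complete

def stem(word):
    """Stemming: cut off the first matching suffix, keeping at least 3 letters."""
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word

# Toy dictionary standing in for the detailed dictionaries a lemmatizer uses
LEMMAS = {"was": "be", "were": "be", "mice": "mouse", "better": "good"}

def lemmatize(word):
    """Lemmatization: look the word up and fall back to the word itself."""
    return LEMMAS.get(word, word)

text = "The contract was signed. Payments were delayed!"
for sentence in split_sentences(text):
    tokens = tokenize(sentence)
    print(tokens, [stem(t) for t in tokens], [lemmatize(t) for t in tokens])
```

Note the difference between the last two steps: stemming leaves "were" untouched because no suffix matches, while the dictionary lookup maps it to the lemma "be".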

Semantic analysis

Semantic analysis uses algorithms to try to understand the meaning and interpretation of words and the structure of sentences. To do this, it makes use of various techniques. Named Entity Recognition (NER) is used to determine the parts of a text that can be identified and classified into preset groups (e.g., names of people or places). Word sense disambiguation is about giving meaning to a word based on context. Natural language generation uses databases to infer semantic intent and convert it into human language.
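
As a rough illustration of two of these techniques, the following Python sketch tags entities by dictionary lookup and picks a word sense by counting the words a sentence shares with each sense definition (a simplified form of the Lesk approach). The entity list, the sense definitions and the stopword list are all invented for this example; real systems learn such knowledge from large data sets.

```python
# Hypothetical lookup table of known entities and their preset groups
GAZETTEER = {"berlin": "PLACE", "paris": "PLACE", "alice": "PERSON"}

def tag_entities(sentence):
    """Named Entity Recognition by simple dictionary lookup."""
    tokens = [w.strip(".,!?") for w in sentence.split()]
    return [(tok, GAZETTEER[tok.lower()]) for tok in tokens if tok.lower() in GAZETTEER]

# Hypothetical sense inventory with one short definition per sense
SENSES = {
    "bank": {
        "financial institution": "an institution that accepts deposits and lends money",
        "river side": "the sloping land beside a river or lake",
    }
}
STOPWORDS = {"a", "an", "the", "of", "and", "or", "that", "to", "on", "at", "in", "we", "i"}

def content_words(text):
    """Lowercase the words, strip punctuation and drop stopwords."""
    return {w.strip(".,!?").lower() for w in text.split()} - STOPWORDS

def disambiguate(word, sentence):
    """Word sense disambiguation: choose the sense whose definition
    shares the most content words with the sentence."""
    context = content_words(sentence)
    best_sense, best_overlap = None, -1
    for sense, definition in SENSES[word].items():
        overlap = len(context & content_words(definition))
        if overlap > best_overlap:
            best_sense, best_overlap = sense, overlap
    return best_sense

print(tag_entities("Alice flew to Berlin."))
print(disambiguate("bank", "She deposits money at the bank"))
print(disambiguate("bank", "We sat on the bank of the river"))
```

When no definition overlaps with the sentence, this sketch simply returns the first sense listed, which is one of the reasons real systems use far richer context.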

Natural Language Processing: Application

NLP is behind many of the tools and software we use every day. Without Natural Language Processing, there would be no voice-controlled GPS systems, no digital assistants, no speech-to-text dictation software, and no customer service chatbots.

Better than a human with Big Data

Speech recognition (speech-to-text) deals with the conversion of spoken language into text data. Natural Language Processing analyzes speech patterns, meaning, relationships and classification of words and assembles the statements into a complete sentence. DL algorithms are even able to detect accent or speech impairments more accurately.

What makes speech recognition using the speech-to-text approach particularly difficult is the way people accent words. We use homonyms, synonyms, irony and sarcasm, informal phrases, idioms, culture- and domain-specific jargon, as well as lexical, syntactic, and semantic ambiguities. All these variations of natural language sometimes cause comprehension problems even for humans. However, with the help of Big Data combined with Artificial Intelligence, machines could in the future even surpass humans in comprehending them.

Applications that NLP makes possible

Search engines use Natural Language Processing to deliver relevant search results based on similar search behavior or user intent. Spell checking enables everyone in the organization to create grammatically correct and error-free content. The best spam detection technologies work with NLP to scan emails for indicators such as overuse of terms and phrases that often indicate spam or phishing.

Recognize emotions in text

Sentiment analysis is a contextual text mining technique that identifies and extracts subjective information in source material. This has made NLP an indispensable tool for analyzing social media posts for attitudes and emotions in response to products and the corporate brand, for gaining insights into how customers perceive a company's brand, and for using the insights gained for product design and advertising campaigns.
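
The simplest form of sentiment analysis counts positive and negative words. The Python sketch below assumes three tiny, invented word lists and a basic negation rule; it is meant only to show the idea and would miss irony, sarcasm and most real-world phrasing.

```python
import re

# Hypothetical mini-lexicons; real sentiment lexicons contain thousands of entries
POSITIVE = {"great", "love", "excellent", "fast", "helpful"}
NEGATIVE = {"bad", "slow", "broken", "terrible", "hate"}
NEGATIONS = {"not", "never", "no"}

def sentiment_score(text):
    """Add +1 per positive word and -1 per negative word,
    flipping the sign when the previous word is a negation."""
    tokens = re.findall(r"[a-z']+", text.lower())
    score = 0
    for i, tok in enumerate(tokens):
        polarity = (tok in POSITIVE) - (tok in NEGATIVE)
        if polarity and i > 0 and tokens[i - 1] in NEGATIONS:
            polarity = -polarity
        score += polarity
    return score

def sentiment_label(text):
    """Map the numeric score to a coarse label."""
    score = sentiment_score(text)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

posts = [
    "I love the new app, support was fast and helpful",
    "The update is not great and the app is slow",
    "The parcel arrived on Monday",
]
for post in posts:
    print(sentiment_label(post), "|", post)
```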

Google has been using Natural Language Processing for a long time

A well-known example of the use of NLP technology is also Google Translate. This is not just about replacing words in one language with words in another, but also about effective translation that captures the meaning and tone of the input and renders it with the same meaning and desired effect. Virtual assistants and chatbots from Siri to Alexa to Google Assistant work on the basis of Natural Language Processing to recognize patterns in voice commands and generate natural language for responses and comments. Natural Language Processing can digest large volumes of digital text and generate summaries for indexes, research databases, or busy readers.

From text classification to intent recognition

Customer service automation supported by Natural Language Processing covers a range of processes, from routing tickets to the most appropriate agent to using chatbots to resolve frequent queries. To do this, Natural Language Processing makes use of text classification and topic classification, which assigns predefined tags to a text based on its content to identify the frequency of certain topics and terms across different stakeholder groups. Intent detection has the purpose of identifying the goal or intent behind a text. Text extraction provides the ability to automatically summarize text or find important information.
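
Ticket routing with topic classification and intent detection can be sketched as follows. The queue names, keyword sets and intent patterns are hypothetical, and keyword matching stands in for the trained classifiers a real customer service system would use.

```python
import re

# Hypothetical topic tags with the keywords that indicate them
TOPIC_KEYWORDS = {
    "billing": {"invoice", "refund", "charge", "payment"},
    "technical": {"error", "crash", "login", "password"},
    "shipping": {"delivery", "parcel", "tracking", "shipment"},
}

# Hypothetical intents, checked in order
INTENT_PATTERNS = [
    ("cancel_subscription", re.compile(r"\bcancel\b")),
    ("request_refund", re.compile(r"\brefund\b|\bmoney back\b")),
    ("reset_password", re.compile(r"\b(reset|forgot)\b.*\bpassword\b")),
]

def classify_topic(text):
    """Topic classification: assign the predefined tag with the most keyword hits."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    scores = {topic: len(words & keywords) for topic, keywords in TOPIC_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "general"

def detect_intent(text):
    """Intent detection: return the first intent whose pattern matches."""
    lowered = text.lower()
    for intent, pattern in INTENT_PATTERNS:
        if pattern.search(lowered):
            return intent
    return "unknown"

def route_ticket(text):
    """Combine both results into a routing decision."""
    return {"queue": classify_topic(text), "intent": detect_intent(text)}

print(route_ticket("I forgot my password and the login page shows an error"))
print(route_ticket("Please refund the charge on my last invoice"))
```

A ticket that matches no keyword falls back to a "general" queue, so unusual requests still reach a human agent.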

How does NLP fit into process automation?

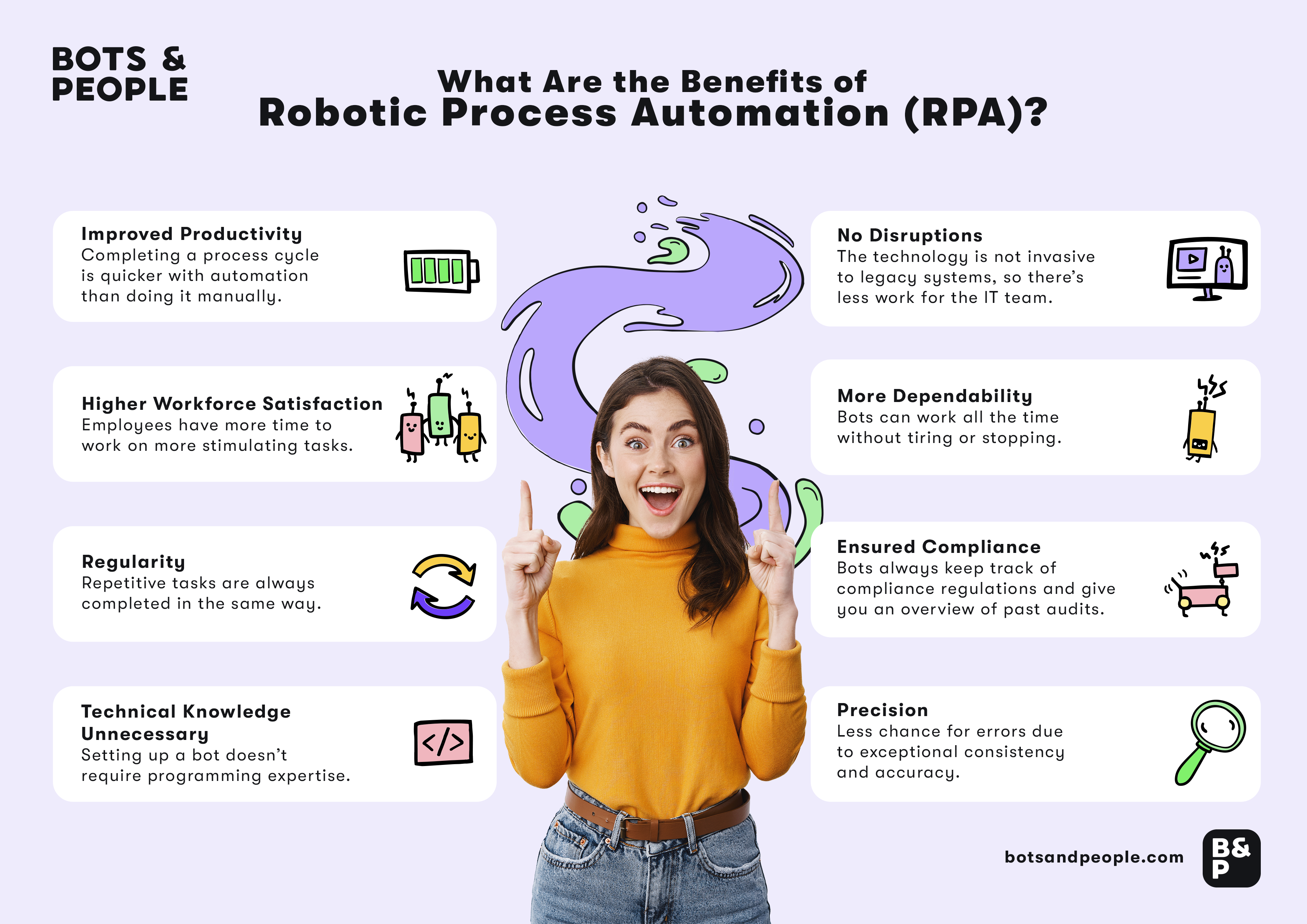

Robotic Process Automation (RPA) and other Business Process Automation (BPA) technologies are now permanent fixtures on the digitization agenda of companies. Extracting information from unstructured information sources, for example, is a common task in countless business scenarios, but one that RPA, iPaaS and similar technologies still fail at today. Whether these technologies can continue to defend their place in the automation world in the coming years will depend above all on the integration of cognitive components with higher levels of intelligence.

NLP solutions have proven to be a mature technology for solving typical problems in extracting insights from unstructured data in a convenient, fast and cost-effective way. With Natural Language Processing and Artificial Intelligence, RPA and similar technologies become Intelligent Process Automation (IPA) and thus help companies automate processes sustainably.

Text content automation

Contracts are examples of unstructured content. An RPA bot could automatically extract the content of a contract that arrives as an attachment in the company's inbox and then pass it to an NLP tool. Here, the complex data such as contracting parties, the terms of specific clauses, and those affected in a legal action are extracted. After the NLP tool recognizes all relevant information regarding parties, date, term, assignment, change of control, audit, governing law, force majeure, indemnification, limitation of liability, etc., the RPA bot takes the information and automatically inserts it into the ERP.
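
The extraction step in this scenario can be sketched in Python. Everything here is an assumption made for illustration: the contract text is invented, regular expressions stand in for a real NLP tool, and `to_erp_record` is a hypothetical stand-in for whatever the RPA bot would actually submit to the ERP.

```python
import re

# Invented sample contract text
CONTRACT_TEXT = """
This Agreement is made on 12 March 2024 between Acme GmbH and Example AG.
The term of this Agreement is 24 months.
This Agreement shall be governed by the laws of Germany.
"""

# One pattern per field; a real NLP tool would not depend on fixed wording
PATTERNS = {
    "parties": re.compile(r"between (.+?) and (.+?)\.\s"),
    "date": re.compile(r"made on (\d{1,2} \w+ \d{4})"),
    "term_months": re.compile(r"term of this Agreement is (\d+) months"),
    "governing_law": re.compile(r"governed by the laws of ([A-Z][\w ]+)\."),
}

def extract_contract_fields(text):
    """Return a dict of extracted fields; a field is None if nothing matched."""
    fields = {}
    for name, pattern in PATTERNS.items():
        match = pattern.search(text)
        if match is None:
            fields[name] = None
        elif len(match.groups()) > 1:
            fields[name] = list(match.groups())
        else:
            fields[name] = match.group(1)
    return fields

def to_erp_record(fields):
    """Hypothetical hand-over step: shape the fields as a record for the ERP."""
    record = dict(fields)
    if record.get("term_months") is not None:
        record["term_months"] = int(record["term_months"])
    return record

record = to_erp_record(extract_contract_fields(CONTRACT_TEXT))
print(record)
```

Fields that cannot be found stay `None`, which gives the RPA bot a simple signal to pass the contract to a human reviewer instead of inserting incomplete data.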

Natural Language Processing based on Machine Learning and combined with Artificial Intelligence extends the possibilities of automation to textual content that requires interpretation. In the context of RPA, the most interesting applications seem to be data scraping, data quality assessment and data quality improvement.

Conclusion

Natural Language Processing is one of the most promising areas of Artificial Intelligence that will permanently change the way humans and machines interact in the coming years. Today, companies are already using Natural Language Processing to automate everyday processes and gain new insights from unstructured data. Every day, we use a variety of applications that have NLP behind them, such as chatbots or the Google search engine. And considering that the amount of unstructured data will continue to increase, it is easy to appreciate the importance of Natural Language Processing for our private as well as economic lives. Ever more sophisticated algorithms will deliver ever more accurate results and influence more and more aspects of our lives.
